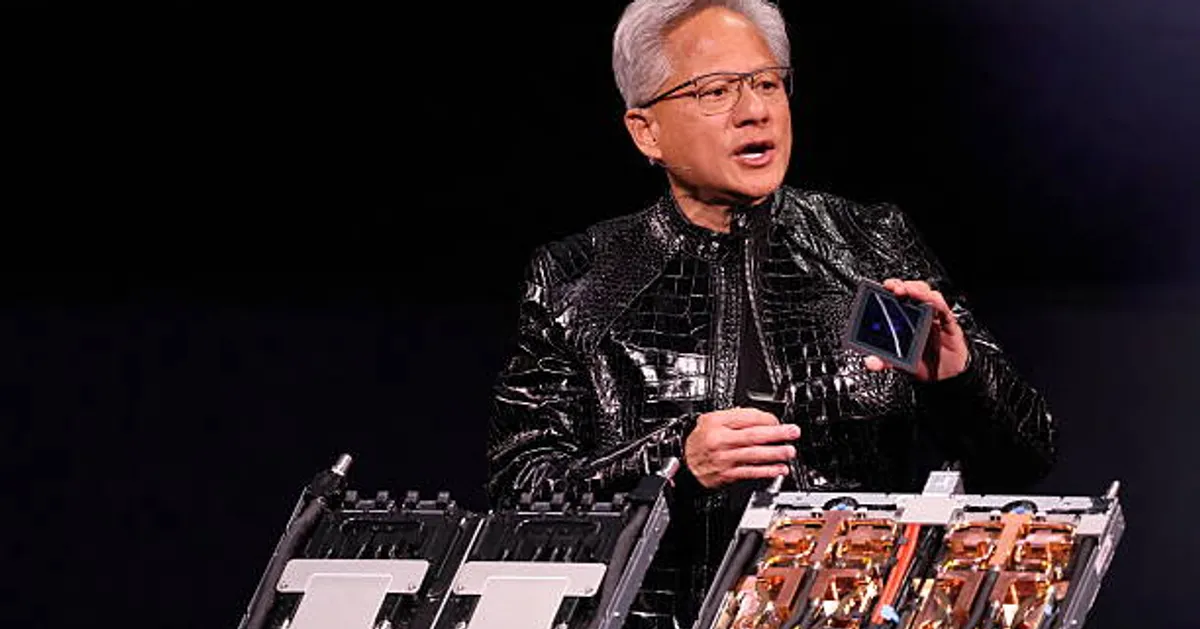

Nvidia CEO Says Next-Generation AI Chips in Full Production as Competition Intensifies

Daniel Okoye

LAS VEGAS, Jan 5 (GeokHub) — Nvidia Chief Executive Jensen Huang said on Monday that the company’s next generation of artificial intelligence chips is now in full production, promising a major leap in performance as competition accelerates across the AI hardware market.

Speaking at the Consumer Electronics Show (CES) in Las Vegas, Huang said the new chips can deliver up to five times the AI computing power of Nvidia’s previous generation when running chatbots and other AI-driven applications. The chips are expected to be released later this year and are already being tested by major AI firms, according to Nvidia executives.

At the center of the rollout is Nvidia’s new Vera Rubin platform, a system built from six distinct chips. The flagship server configuration will contain 72 graphics processing units and 36 central processing units, while larger deployments can be scaled into clusters of more than 1,000 Rubin chips, Huang said.

Huang said the platform could make generating AI “tokens,” the core units that underpin chatbot responses, as much as ten times more efficient, significantly reducing the computing cost of serving AI models to large user bases.

The performance gains come partly from Nvidia’s use of a proprietary data format, which Huang said the company hopes will be adopted more broadly across the industry.

“This is how we were able to deliver such a gigantic step up in performance, even though we only increased transistor counts by about 1.6 times,” Huang said.

While Nvidia continues to dominate the market for training large AI models, it faces growing pressure in deployment and inference, the process of delivering AI services to end users. That pressure comes from rivals such as Advanced Micro Devices, as well as from major customers including Google, which has been developing its own AI chips.

Much of Huang’s CES address focused on improving inference performance. Nvidia unveiled a new feature called context memory storage, designed to help chatbots respond faster and more accurately to long conversations and complex prompts.

The company also introduced a new generation of networking switches using co-packaged optics, a technology aimed at more efficiently linking thousands of machines inside large data centers. The move places Nvidia in direct competition with established networking players.

Nvidia said cloud infrastructure firm CoreWeave will be among the first to deploy Vera Rubin systems, with broader adoption expected from major cloud providers later this year.

Beyond data centers, Huang highlighted new open-source software tools for autonomous vehicles that allow engineers to trace and audit how self-driving systems make decisions. Nvidia said the software, known as Alpamayo, will be released alongside the data used to train it.

“Only by open-sourcing both the models and the training data can you truly trust how those systems were built,” Huang said.

Huang also addressed Nvidia’s recent acquisition of talent and chip technology from AI startup Groq, saying the deal would not disrupt Nvidia’s core business but could lead to expanded product offerings.

Meanwhile, Nvidia continues to navigate geopolitical challenges. Huang said demand remains strong in China for Nvidia’s H200 chips, which predate its current Blackwell architecture. The company has applied for export licenses and is awaiting approval from U.S. and other authorities before shipping additional units.