How We Slashed Our Kubernetes Bill by 60%: A 2025 FinOps Case Study

GeokHub

In 2024, our Kubernetes bill was our fastest-growing line item. We had successfully migrated to the cloud, embraced microservices, and achieved fantastic scalability. But our CFO saw a different story: a runaway train of cloud costs with no clear owner or control.

Our EKS clusters were costing us over $85,000 per month. We knew we were wasting money, but the dynamic nature of Kubernetes made pinning down the waste feel like trying to nail jelly to a wall.

After a three-month FinOps deep dive, we didn’t just trim the fat; we re-architected our financial relationship with our cloud-native infrastructure. We reduced our Kubernetes bill to ~$34,000 per month—a 60% saving—and built a sustainable cost culture in the process.

Here is our 2025 playbook.

The Pre-2025 Reality: A Perfect Storm of Waste

Our initial investigation revealed a classic set of cloud-native inefficiencies:

- The “Set-and-Forget” Pod: Resource requests and limits were either non-existent or based on wild guesses from two years ago, leading to massive over-provisioning.

- The “Always-On” Burden: On evenings, weekends, and holidays, our development and staging environments ran at full production-scale cost despite receiving zero traffic.

- The “No-Discount” Cluster: We were running almost entirely on On-Demand Instances, paying a huge premium for flexibility we didn’t need.

- Orphaned Storage: Persistent volumes from deleted deployments were accumulating like digital ghosts, costing thousands in unattached storage.

The 2025 FinOps Strategy: Our Four-Pillar Attack

We assembled a cross-functional “Cost Tiger Team” with members from Engineering, Finance, and DevOps. Our mantra was: “Optimize Utilization, Not Just Cost.”

Pillar 1: Intelligent, Automated Right-Sizing with VPA and Goldilocks

Manually calculating CPU and memory requests is a losing game. We automated it.

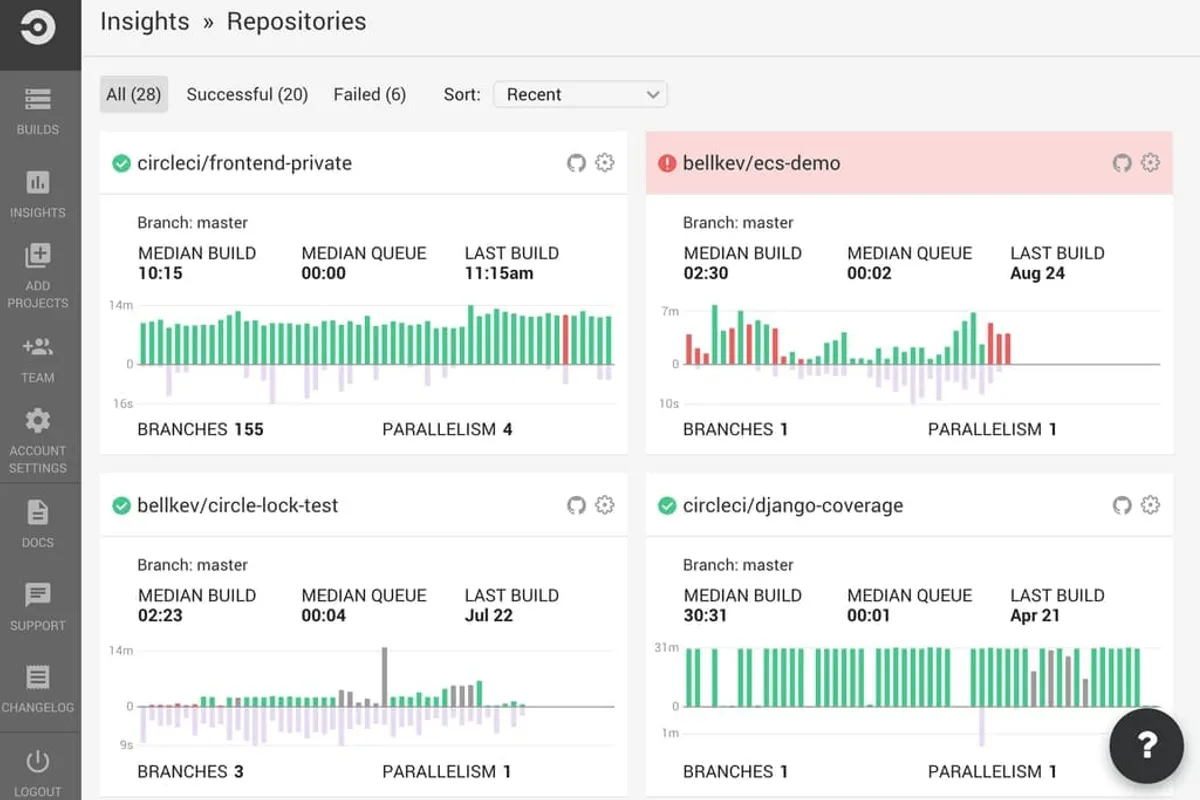

- The Tool: We deployed the Vertical Pod Autoscaler (VPA) in recommendation mode across our non-production clusters. For production, we used Goldilocks, a tool that uses VPA to provide a dashboard of resource recommendations.

- The Action: Instead of guessing, developers now had a data-driven dashboard showing the actual 99th percentile usage of their pods. They updated their resource requests to match, often cutting CPU and memory requests by 50-70% overnight.

- The 2025 Twist: We integrated these recommendations directly into our CI/CD pipeline using an OpenCost plugin. Pull requests now show a cost differential, prompting developers to right-size their resources before deployment.
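
To make the right-sizing idea concrete, here is a minimal sketch of percentile-based request sizing. It is a simplified illustration, not VPA's or Goldilocks' actual algorithm. The usage samples, the 15% headroom, and the function names are all assumptions chosen for the example.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list of numbers."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))  # 1-based nearest rank
    return ordered[max(rank, 1) - 1]


def recommend_request(samples, pct=99, headroom=0.15):
    """Suggest a request: the chosen percentile plus a safety margin.

    The 15% headroom is an illustrative assumption, not a VPA default.
    """
    return percentile(samples, pct) * (1 + headroom)


# Hypothetical CPU usage samples (millicores) for one container.
cpu_usage_m = [120, 135, 140, 150, 160, 170, 180, 200, 220, 410]
current_request_m = 1000  # hypothetical "wild guess" request

suggested = recommend_request(cpu_usage_m)
reduction = 1 - suggested / current_request_m
print(f"suggested request: {suggested:.0f}m, reduction: {reduction:.0%}")
```

With these made-up numbers the suggested request lands at roughly half of the original guess, which is the kind of gap the dashboard surfaced for us.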

Pillar 2: Aggressive Spot Instance Adoption with Intelligent Fallbacks

Spot Instances, which can be discounted by up to 90% compared with On-Demand pricing, are the lowest-hanging fruit in Kubernetes cost optimization. The fear of evictions was holding us back. We eliminated it.

- The Tool: We migrated our stateless workloads to nodes provisioned by Karpenter, which launches right-sized instances directly instead of scaling fixed node groups.

- The Action: Karpenter’s modern provisioning logic doesn’t just look for the cheapest instance; it analyzes the entire Spot market for the most stable and cost-effective options, diversifying across instance types and availability zones to minimize the impact of a single Spot termination.

- The 2025 Result: Over 80% of our worker nodes now run on Spot Instances. For the few stateful workloads that couldn’t tolerate interruption, we used a mix of On-Demand capacity and Compute Savings Plans, which offer more flexibility than traditional Reserved Instances.
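
As a rough way to reason about what a shift in Spot share does to a compute bill, the sketch below estimates a blended hourly cost. The Spot shares (5% and 82%) come from our results. The On-Demand rate, node count, and 65% average Spot discount are hypothetical placeholders, and the model ignores interruptions, instance-type mix, and Savings Plans.

```python
def blended_hourly_cost(on_demand_rate, node_count, spot_share, spot_discount):
    """Estimate hourly fleet cost for a mix of Spot and On-Demand nodes."""
    if not 0 <= spot_share <= 1 or not 0 <= spot_discount <= 1:
        raise ValueError("spot_share and spot_discount must be between 0 and 1")
    spot_nodes = node_count * spot_share
    on_demand_nodes = node_count - spot_nodes
    spot_rate = on_demand_rate * (1 - spot_discount)
    return on_demand_nodes * on_demand_rate + spot_nodes * spot_rate


# Hypothetical inputs: replace with your own pricing data.
RATE = 0.20      # USD per node-hour, On-Demand (assumption)
NODES = 100      # fleet size (assumption)
DISCOUNT = 0.65  # average Spot discount actually achieved (assumption)

before = blended_hourly_cost(RATE, NODES, spot_share=0.05, spot_discount=DISCOUNT)
after = blended_hourly_cost(RATE, NODES, spot_share=0.82, spot_discount=DISCOUNT)
print(f"before: ${before:.2f}/h, after: ${after:.2f}/h, saving: {1 - after / before:.0%}")
```

The point of the model is the shape of the curve rather than the exact figures: the achieved discount matters as much as the Spot share, so plug in numbers from your own bill.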

Pillar 3: The “Schedule-to-Save” Revolution

Why pay for environments that no one is using?

- The Tool: We implemented Kubernetes Downscaler with custom schedules.

- The Action: We tagged all non-production namespaces (e.g., `dev`, `staging`). Downscaler scales their deployments to zero replicas on weekdays after 7 PM and all weekend. It scales them back up at 7 AM on weekdays.

- The 2025 Impact: This single change, with zero engineering effort, cut our non-production cluster costs by 65%. For databases that couldn’t be scaled to zero, we used AWS RDS’s new Pause/Resume feature, achieving similar savings.
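
The schedule itself explains most of the saving. The sketch below models the 7 AM to 7 PM weekday window and counts how much of a week falls inside it. It is an illustration of the arithmetic only, not Kubernetes Downscaler's implementation, and the function names are made up for the example.

```python
from datetime import datetime, timedelta

UPTIME_START_HOUR = 7   # scale up at 7 AM
UPTIME_END_HOUR = 19    # scale down at 7 PM


def is_uptime(moment):
    """True when non-production workloads should be running."""
    is_weekday = moment.weekday() < 5  # Monday=0 ... Friday=4
    return is_weekday and UPTIME_START_HOUR <= moment.hour < UPTIME_END_HOUR


def weekly_uptime_fraction():
    """Share of the 168 hours in a week that fall inside the uptime window."""
    monday = datetime(2025, 1, 6)  # any Monday works as the starting point
    hours = [monday + timedelta(hours=h) for h in range(7 * 24)]
    return sum(is_uptime(h) for h in hours) / len(hours)


fraction = weekly_uptime_fraction()
print(f"up {fraction:.1%} of the week, down {1 - fraction:.1%}")
# prints "up 35.7% of the week, down 64.3%"
```

Sixty running hours out of 168 means the environments are off for about 64% of the week, which lines up with the roughly 65% reduction we saw in non-production cluster costs.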

Pillar 4: Granular Cost Visibility with OpenCost & GitOps Tagging

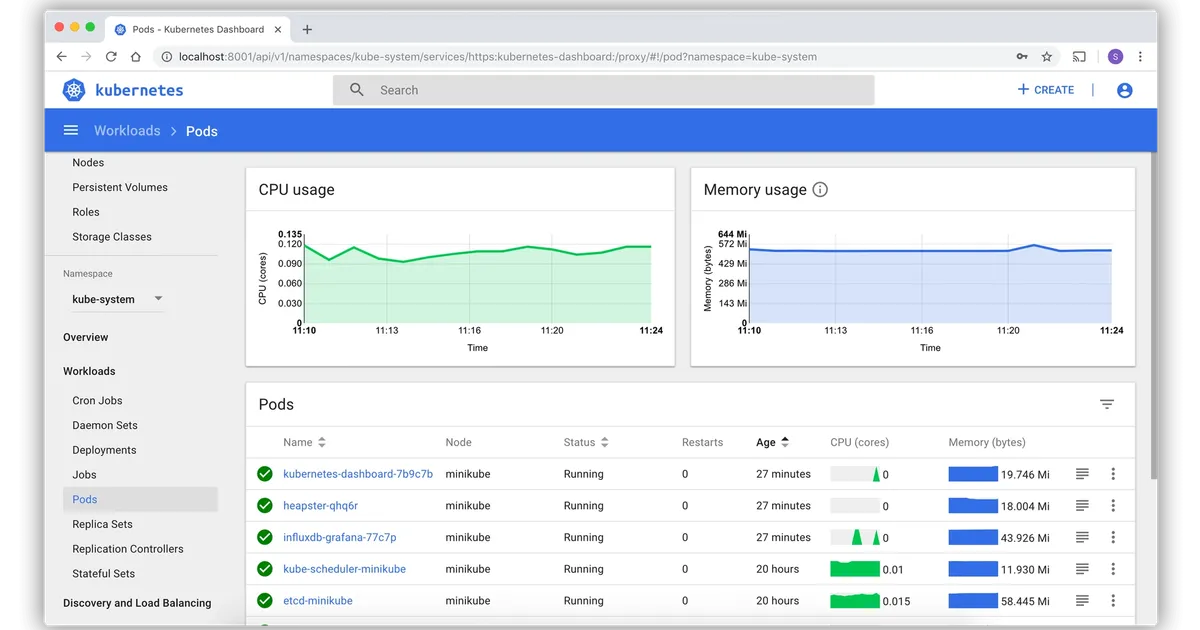

You can’t manage what you can’t measure. We made cost a first-class metric, visible to everyone.

- The Tool: We deployed OpenCost, a CNCF project widely treated as the open-source standard for Kubernetes cost monitoring. We integrated it with our existing Grafana dashboards.

- The Action: We enforced a strict GitOps tagging policy using Kustomize patches. Every Kubernetes namespace and resource is automatically labeled with `cost-center`, `team`, and `project`. This allowed us to show each product team their real-time spend in a shared dashboard.

- The Cultural Shift: This was the game-changer. When teams could see the direct cost impact of their code and resource choices, they self-optimized. “Cost-aware development” became a natural part of our engineering culture.
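
Once every resource carries the three labels, per-team reporting reduces to a group-by. Here is a minimal sketch of that roll-up, plus a check for missing labels. The record shape, namespaces, and dollar amounts are hypothetical and do not reflect OpenCost's actual export format.

```python
from collections import defaultdict

REQUIRED_LABELS = ("cost-center", "team", "project")


def missing_labels(labels):
    """Return the required labels that are absent or empty."""
    return [key for key in REQUIRED_LABELS if not labels.get(key)]


def cost_by_label(records, label):
    """Sum cost per label value; unlabeled spend goes to 'unallocated'."""
    totals = defaultdict(float)
    for record in records:
        owner = record["labels"].get(label, "unallocated")
        totals[owner] += record["cost_usd"]
    return dict(totals)


# Hypothetical daily cost records, one per namespace.
records = [
    {"namespace": "checkout", "cost_usd": 42.5,
     "labels": {"cost-center": "cc-101", "team": "payments", "project": "checkout"}},
    {"namespace": "search", "cost_usd": 30.0,
     "labels": {"cost-center": "cc-202", "team": "discovery", "project": "search"}},
    {"namespace": "checkout-batch", "cost_usd": 7.5,
     "labels": {"cost-center": "cc-101", "team": "payments", "project": "checkout"}},
    {"namespace": "scratch", "cost_usd": 3.0,
     "labels": {"team": "discovery"}},
]

print(cost_by_label(records, "team"))
print(cost_by_label(records, "cost-center"))
for record in records:
    gaps = missing_labels(record["labels"])
    if gaps:
        print(f"{record['namespace']} is missing labels: {gaps}")
```

Routing unlabeled spend to an explicit "unallocated" bucket keeps the gap visible on the dashboard instead of silently dropping it.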

The Results & The Roadmap

After 90 days, the results were undeniable:

- Overall K8s Spend: Reduced from $85,000/mo to ~$34,000/mo (60% savings).

- Spot Adoption: Increased from 5% to 82% of worker nodes.

- Cluster Efficiency: Average node CPU utilization rose from 18% to over 65%.

- Developer Empowerment: Product teams now own and understand their cloud costs.
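
For anyone who wants to sanity-check the headline number, the arithmetic is short. The monthly figures are the ones reported above. The annualized value simply assumes the new run rate holds for twelve months.

```python
def savings(before, after):
    """Return absolute and fractional savings between two spend levels."""
    absolute = before - after
    return absolute, absolute / before


monthly_before = 85_000  # USD per month before the project
monthly_after = 34_000   # USD per month after 90 days

saved, pct = savings(monthly_before, monthly_after)
print(f"saved ${saved:,}/mo ({pct:.0%}), about ${saved * 12:,}/yr at this run rate")
# prints "saved $51,000/mo (60%), about $612,000/yr at this run rate"
```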

Your 2025 Action Plan

- Start Today with OpenCost: Deploy OpenCost in your cluster. Within about an hour, you should have a much clearer view of where your money is going.

- Pilot Karpenter with Spot: Choose one non-critical workload and migrate it to Karpenter-provisioned Spot nodes. The experience will build confidence.

- Schedule Your Staging: Implement Kubernetes Downscaler on your `staging` environment this Friday. Watch your bill drop over the weekend.

- Socialize the Data: Share cost dashboards with engineering leads. Make cost a non-blaming, collaborative metric.

Conclusion: FinOps is a Feature, Not a Bug

In 2025, cloud cost management isn’t an ops task; it’s a core engineering competency. The tools have matured, and the practices are proven. By treating cost as a continuous efficiency metric—just like performance or reliability—we transformed our Kubernetes spend from a source of anxiety into a strategic advantage.

The savings we unlocked didn’t just go back to the bottom line; they were reinvested into innovation, funding the very features that let us outpace our competition.